Built for AI teams

Web data infrastructure for AI

Multi-provider web scraping with built-in fallback and clean, structured output. An end-to-end solution for reliable data.

LLM extraction

Any page into structured JSON

Use ready-made parsers from integrated LLM providers, or build your own, with or without code.
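To make the "build your own with code" option concrete, here is a minimal sketch of a hand-written parser for the sample product page shown in this section. It is not the product's parser API: the names `ProductParser`, `extract_product`, and `CURRENCY_BY_SYMBOL` are hypothetical, the "$" to "USD" mapping is an assumption, and only the Python standard library is used. It does no schema validation.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin

# Assumption: a bare "$" means US dollars. A real parser would need locale context.
CURRENCY_BY_SYMBOL = {"$": "USD"}


class ProductParser(HTMLParser):
    """Collects product fields from markup shaped like the sample page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.record = {}
        self._field = None       # text field currently being read, if any
        self._in_rating = False  # True while inside <div class="rating">

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "h1":
            self._field = "title"
        elif tag == "span" and "price" in classes:
            self._field = "price"
        elif tag == "div" and "stock" in classes:
            # The sample marks availability with an extra "in" class.
            self.record["inStock"] = "in" in classes
        elif tag == "div" and "rating" in classes:
            self.record["rating"] = float(attrs["data-score"])
            self._in_rating = True
        elif tag == "img":
            # Resolve the relative src against the page URL.
            self.record["image"] = urljoin(self.base_url, attrs.get("src", ""))

    def handle_endtag(self, tag):
        self._field = None
        if tag == "div":
            self._in_rating = False

    def handle_data(self, data):
        text = data.strip()
        if not text:
            return
        if self._field == "title":
            self.record["title"] = text
        elif self._field == "price":
            match = re.match(r"([^\d\s]*)\s*([\d.,]+)", text)
            if match:
                symbol, amount = match.groups()
                self.record["price"] = float(amount.replace(",", ""))
                self.record["currency"] = CURRENCY_BY_SYMBOL.get(symbol)
        elif self._in_rating:
            match = re.search(r"\(([\d,]+)\s+reviews?\)", text)
            if match:
                self.record["reviewCount"] = int(match.group(1).replace(",", ""))


def extract_product(html, base_url):
    """Return a dict of product fields parsed from `html`."""
    parser = ProductParser(base_url)
    parser.feed(html)
    parser.close()
    return parser.record


PAGE_URL = "https://shop.example/p/airmax-90"
RAW_HTML = """
<article class="product">
  <h1>Air Max 90 - White</h1>
  <span class="price">$129.99</span>
  <div class="stock in">In stock</div>
  <div class="rating" data-score="4.7">
    <span>4.7</span> (1,284 reviews)
  </div>
  <img src="/img/airmax90.jpg" />
</article>
"""
```

Calling `extract_product(RAW_HTML, PAGE_URL)` yields the same fields as the Structured JSON panel. A parser like this breaks as soon as the markup changes, which is the case the LLM-based parsers are meant to cover.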

Raw HTML (shop.example/p/airmax-90)

<article class="product">

<h1>Air Max 90 - White</h1>

<span class="price">$129.99</span>

<div class="stock in">In stock</div>

<div class="rating" data-score="4.7">

<span>4.7</span> (1,284 reviews)

</div>

<img src="/img/airmax90.jpg" />

</article>

Structured JSON (schema-validated)

{

"title": "Air Max 90 - White",

"price": 129.99,

"currency": "USD",

"inStock": true,

"rating": 4.7,

"reviewCount": 1284,

"image": "https://shop.example/img/airmax90.jpg"

}

How It Works

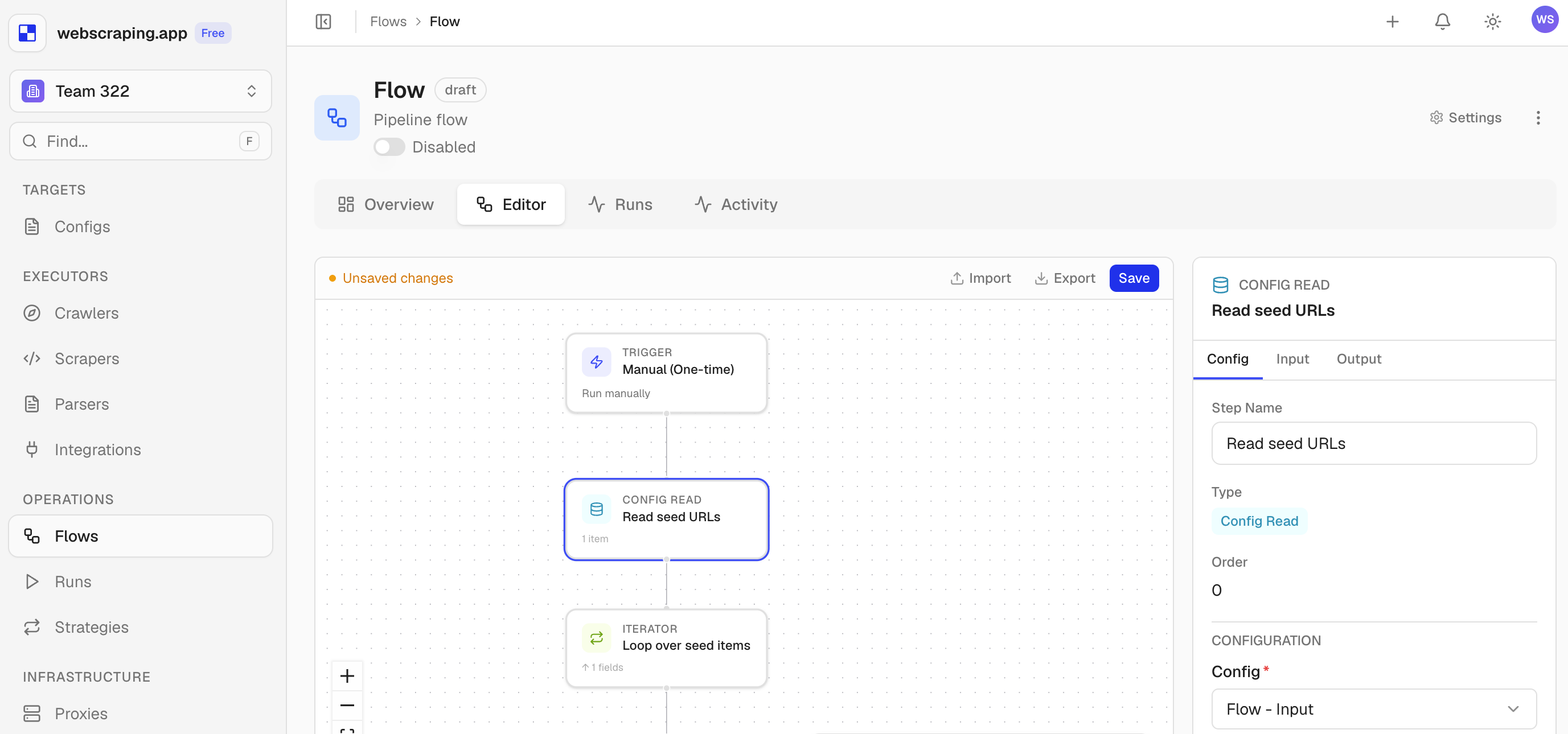

Build a flow. Watch it run.

From URLs to structured data in three steps.

Configure

Connect sources, choose providers, and define inputs and outputs.

Run

We handle routing, retries, and failures across providers.

Monitor

Watch each run live and inspect every step's output as it is produced.
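The Run step's routing, retries, and failure handling can be pictured roughly as follows. This is a sketch under stated assumptions, not the platform's actual logic: a provider is modeled as a plain function that takes a URL and returns HTML, and `fetch_with_fallback`, `AllProvidersFailed`, and `retries_per_provider` are hypothetical names.

```python
from typing import Callable, List, Sequence, Tuple

# Assumption: a provider is a (name, fetch function) pair.
Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(Exception):
    """Raised when every provider has exhausted its retries."""


def fetch_with_fallback(
    url: str,
    providers: Sequence[Provider],
    retries_per_provider: int = 2,
) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, html) from the first success."""
    errors: List[str] = []
    for name, fetch in providers:
        for attempt in range(1, retries_per_provider + 1):
            try:
                return name, fetch(url)
            except Exception as exc:  # any provider error triggers a retry or fallback
                errors.append(f"{name} attempt {attempt}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

Providers are tried in the order given, so the list order doubles as a priority setting. A production version would likely add backoff between attempts and separate retryable errors from permanent ones.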

Features

Built for reliability, not demos

Your Data Layer

One shared dataset connects every step of your flow.

1. Crawler finds 142 URLs: writes them to the shared dataset as input items.
2. Scraper processes each URL: runs each item through your configured providers.
3. Results are saved as structured outputs: use them, export them, or pass them to the next step.
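The three steps above can be sketched as two functions sharing one in-memory dataset. This is illustrative only: `Item`, `Dataset`, `crawl_step`, and `scrape_step` are hypothetical names, and the real data layer's storage and item states are not described in the source.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Optional


@dataclass
class Item:
    url: str
    status: str = "pending"       # assumed states: pending, done, failed
    output: Optional[dict] = None


@dataclass
class Dataset:
    """One shared collection that every step reads from and writes to."""
    items: List[Item] = field(default_factory=list)

    def add_inputs(self, urls: Iterable[str]) -> None:
        known = {item.url for item in self.items}
        for url in urls:
            if url not in known:  # skip URLs already queued
                self.items.append(Item(url))
                known.add(url)

    def pending(self) -> List[Item]:
        return [item for item in self.items if item.status == "pending"]

    def outputs(self) -> List[dict]:
        return [item.output for item in self.items if item.status == "done"]


def crawl_step(dataset: Dataset, discovered_urls: Iterable[str]) -> None:
    """Step 1: write discovered URLs to the dataset as input items."""
    dataset.add_inputs(discovered_urls)


def scrape_step(dataset: Dataset, scrape: Callable[[str], dict]) -> None:
    """Steps 2 and 3: process each pending item and save its structured output."""
    for item in dataset.pending():
        try:
            item.output = scrape(item.url)
            item.status = "done"
        except Exception:
            item.status = "failed"
```

Because each step only touches the dataset, a later step can pick up `dataset.outputs()` without knowing which crawler or scraper produced them.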